Claude’s Mythos Model

The Claude Mythos model, rumored to be too powerful for public release, has sparked speculation that it uses technology from ByteDance’s Seed team. The speculation quickly began trending.

Mythos has fueled speculation about the next generation of LLM architectures. The community is debating whether it employs a Looped Language Model (Looped LM) architecture, a concept from a paper co-authored by the ByteDance Seed team and several universities, with Yoshua Bengio among the contributors.

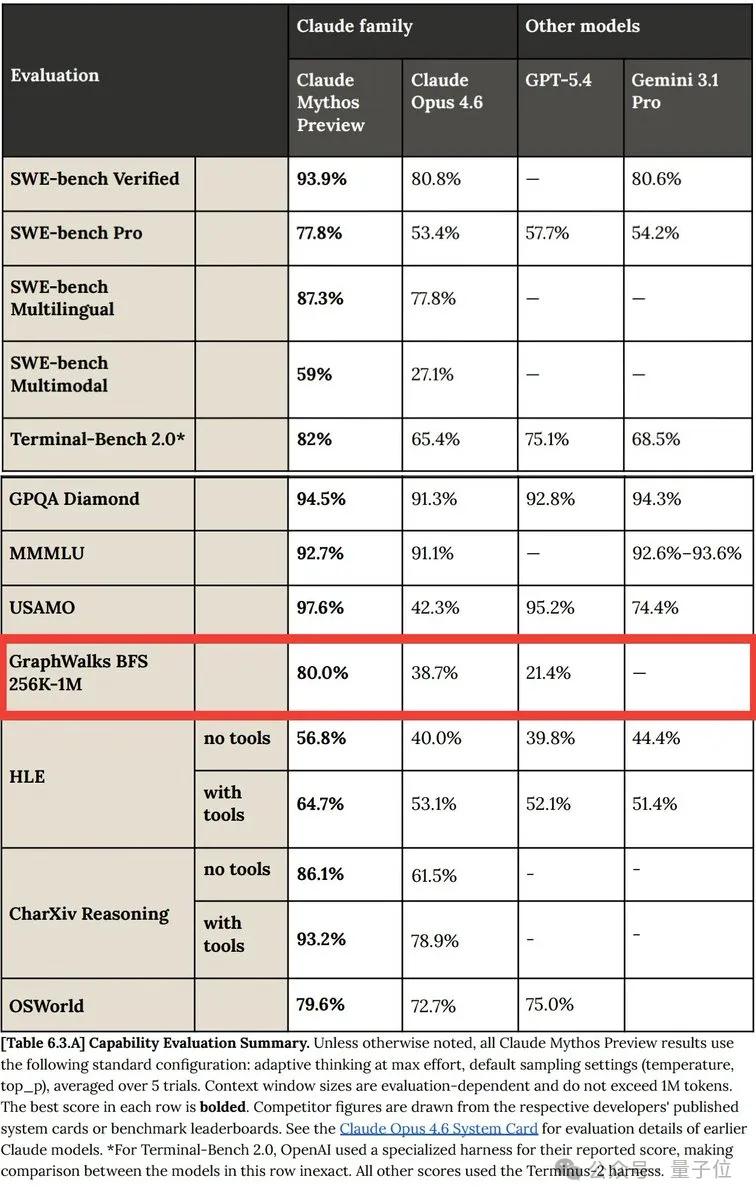

The key clue lies in a set of benchmark results released by Anthropic. The ByteDance paper indicates that graph search is one of the areas where the looped architecture has a significant theoretical advantage over standard RLVR.

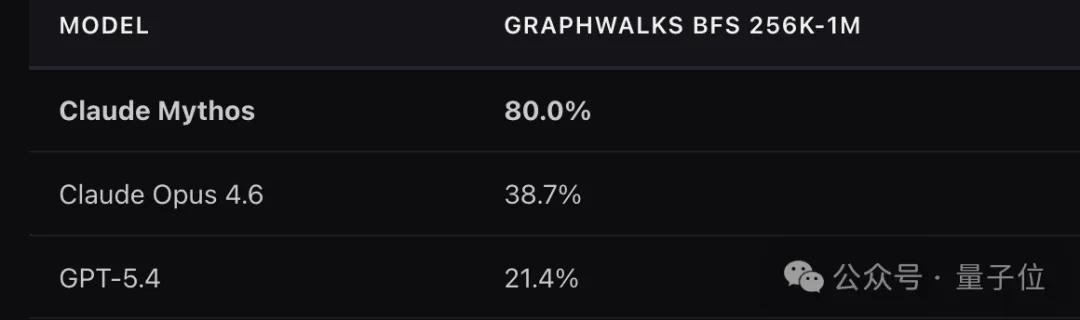

Mythos decisively outperforms its competitor GPT-5.4 on the GraphWalks breadth-first search (BFS) test, scoring 80% against GPT-5.4’s 21.4%, nearly a fourfold difference.

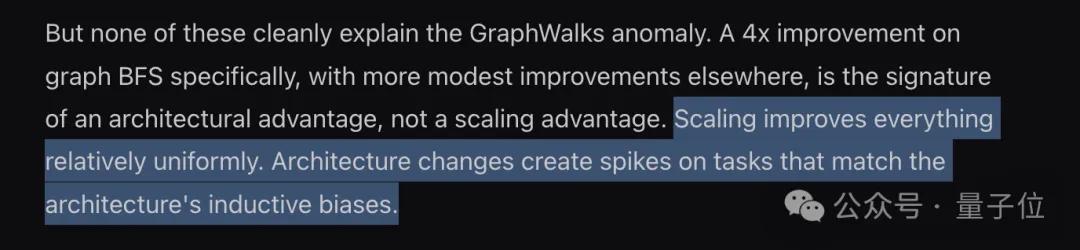

A gap this anomalous suggests that the gain comes from architectural innovation rather than from general scaling laws.

Looped Language Model: Small Models Outperforming Large Models

The GraphWalks BFS test gives the model a complex graph and asks it to perform a breadth-first search: starting from one node, visit all adjacent nodes layer by layer. A standard Transformer handles such a problem with a fixed-depth forward pass and has no built-in notion of iteration.
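The layer-by-layer procedure can be sketched in a few lines of Python. This is a minimal illustration on a hypothetical toy graph (`toy_graph` and `bfs_layers` are names invented here), not the GraphWalks benchmark format, which the article does not specify.

```python
def bfs_layers(graph, start):
    """Return the nodes reachable from `start`, grouped by BFS depth."""
    visited = {start}
    layers = [[start]]
    frontier = [start]
    while frontier:
        next_frontier = []
        for node in frontier:
            for neighbor in graph.get(node, []):
                if neighbor not in visited:
                    visited.add(neighbor)
                    next_frontier.append(neighbor)
        if next_frontier:
            layers.append(next_frontier)
        frontier = next_frontier
    return layers


# Hypothetical toy graph as an adjacency list (not benchmark data).
toy_graph = {
    "a": ["b", "c"],
    "b": ["d"],
    "c": ["d", "e"],
    "d": ["f"],
    "e": [],
    "f": [],
}

print(bfs_layers(toy_graph, "a"))
# [['a'], ['b', 'c'], ['d', 'e'], ['f']]
```

The outer `while` loop is the part that matters for the argument: the number of rounds depends on the depth of the graph, not on a depth fixed in advance.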

Mythos’s 80% score on graph traversal suggests that it performs repeated computation, processing the same information multiple times.

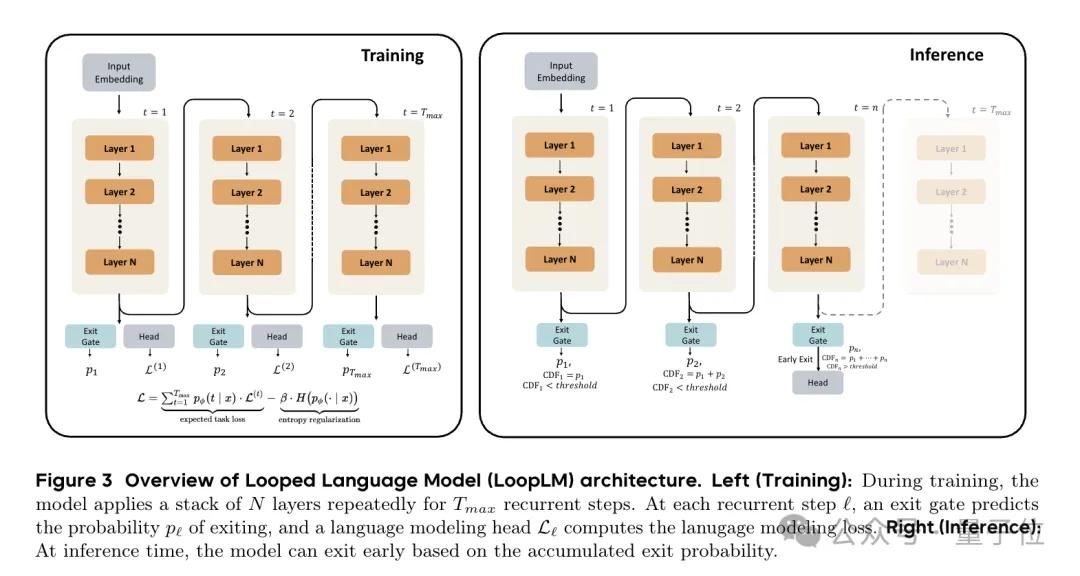

What kind of architecture enables this repeated computation? The ByteDance Seed team proposed the Looped LM architecture in their paper.

In summary, LoopLM has three characteristics:

- No verbose output: it iterates in the model’s latent space instead of emitting more tokens.

- Adaptive depth: fewer loop steps for simple questions and more for difficult ones, adjusted automatically.

- Learned during pre-training: the model learns how to think in latent space, rather than only predicting the next token.
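The first two characteristics can be illustrated with a toy loop. The sketch below assumes a single weight-tied update (`shared_block`, a hypothetical stand-in for a stack of Transformer layers) applied repeatedly to a hidden state, with a simple convergence check standing in for whatever learned exit mechanism a real Looped LM uses. It is not the Ouro implementation, and it says nothing about the pre-training aspect.

```python
import math


def shared_block(hidden, inputs, weight=0.5):
    """Apply the same (weight-tied) update once. Toy stand-in for real layers."""
    return [math.tanh(weight * h + x) for h, x in zip(hidden, inputs)]


def looped_forward(inputs, max_loops=8, tol=1e-3):
    """Reuse `shared_block` until the hidden state stops changing.

    Assumption: a convergence threshold plays the role of the exit rule.
    Returns the final hidden state and the number of loop steps used.
    """
    hidden = [0.0] * len(inputs)
    steps = 0
    for steps in range(1, max_loops + 1):
        new_hidden = shared_block(hidden, inputs)
        change = max(abs(a - b) for a, b in zip(new_hidden, hidden))
        hidden = new_hidden
        if change < tol:
            break  # early exit: the state has settled
    return hidden, steps


_, easy_steps = looped_forward([0.0, 0.0])
_, hard_steps = looped_forward([1.0, -2.0])
print(easy_steps, hard_steps)
```

In this toy, an all-zero input settles after a single pass while a non-zero input needs several, and no extra tokens are produced either way: all of the additional work happens on the hidden state.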

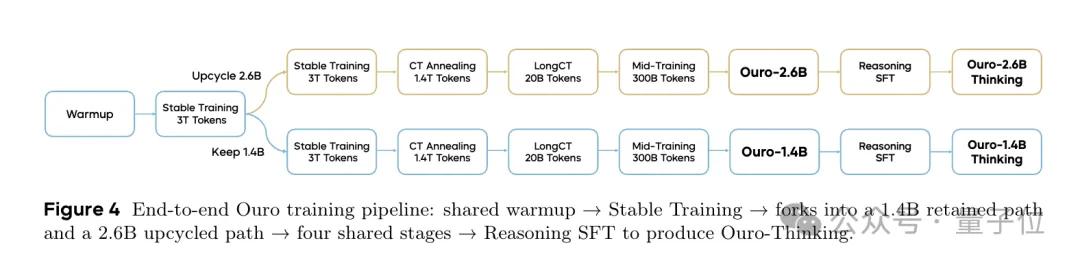

In experiments, the team trained the Ouro series of looped language models, which incorporate this iterative thinking.

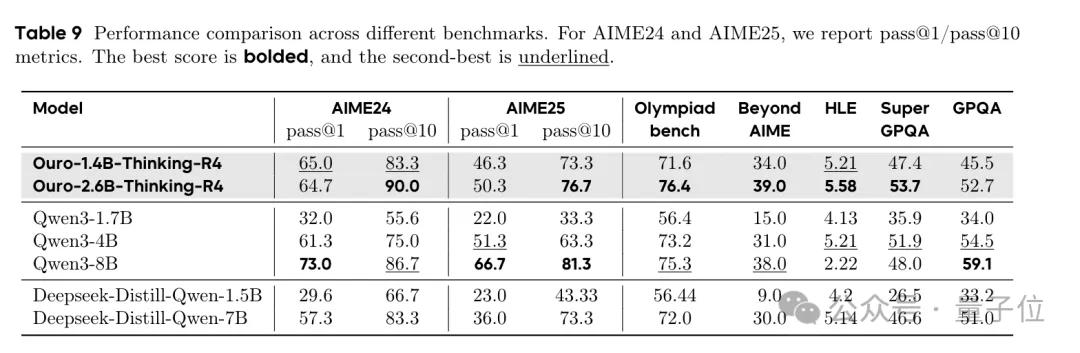

Test results show that the 1.4B Ouro model performs comparably to a traditional 4B model, while the 2.6B Ouro model is equivalent to a traditional 8B-12B model.

To explain where the looped models’ gains come from, the paper distinguishes Knowledge Storage from Knowledge Manipulation.

Knowledge Storage has a limited capacity of approximately 2 bits per parameter, which stays constant regardless of the architecture used. Looping does not let the model retain more information.
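As a back-of-the-envelope check of what the 2-bits-per-parameter figure implies, the sketch below converts parameter counts into storage capacity. The function name is illustrative, and the constant is only the approximation quoted above.

```python
BITS_PER_PARAM = 2  # approximate figure quoted in the text


def storage_capacity_mb(num_params, loops=1):
    """Approximate knowledge-storage capacity in megabytes (10**6 bytes).

    `loops` is accepted only to make the claim explicit: looping is said
    not to change storage capacity, so the argument is deliberately ignored.
    """
    return num_params * BITS_PER_PARAM / 8 / 1_000_000


print(storage_capacity_mb(1_400_000_000))           # 1.4B parameters: 350.0
print(storage_capacity_mb(1_400_000_000, loops=4))  # same model, looped: 350.0
print(storage_capacity_mb(4_000_000_000))           # 4B parameters: 1000.0
```

By this estimate a 1.4B-parameter model stores roughly 350 MB of knowledge whether or not it loops, so matching a 4B model on benchmarks has to come from somewhere other than storage.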

Knowledge Manipulation is different. It covers combining known facts for multi-hop reasoning, executing programs, and searching graph structures, and this capability grows exponentially with the number of loop iterations and training tokens.

In other words, the looped model does not provide AI with a larger knowledge base but significantly enhances its search and combination abilities within that knowledge base.

Three Clues Pointing to a Looped Model Architecture

Several clues suggest that Mythos may indeed be based on a looped model architecture.

The first clue is the breadth-first graph search result. Mythos not only scores nearly four times higher than GPT-5.4 but also shows an unusually large improvement over its predecessor, Opus.

The second clue is that, according to Anthropic, Mythos uses one-fifth as many tokens per task as Opus 4.6 yet runs slower. (It is also five times more expensive!) This is hard to explain within a standard Transformer framework, where fewer tokens should mean faster generation.

A looped model resolves this contradiction neatly: reasoning happens not at the token level but in latent space, so the compute is spent where it never shows up as output tokens.
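The arithmetic behind this explanation is simple if each output token costs several passes through the same layers. The numbers below are invented purely for illustration: Anthropic has published no loop counts, and the 1,000-token baseline is hypothetical. Only the one-fifth token ratio comes from the text.

```python
def layer_stack_passes(tokens, loops_per_token=1):
    """Total passes through the layer stack for one task (toy cost model)."""
    return tokens * loops_per_token


BASELINE_TOKENS = 1000                 # hypothetical non-looped model
LOOPED_TOKENS = BASELINE_TOKENS // 5   # one-fifth the tokens, as in the text
HYPOTHETICAL_LOOPS = 8                 # assumption, not a disclosed figure

baseline_cost = layer_stack_passes(BASELINE_TOKENS)
looped_cost = layer_stack_passes(LOOPED_TOKENS, HYPOTHETICAL_LOOPS)
print(baseline_cost, looped_cost)  # 1000 1600
```

Under this toy model, any loop count above 5 makes the looped run cost more passes than the baseline despite the shorter output. It ignores model size, batching, and every other real-world factor.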

The third clue is Mythos’s exceptional performance in cybersecurity. It scored 83.1% on the CyberGym test against 66.6% for Opus 4.6, a lead of 16.5 percentage points. It also identified thousands of zero-day vulnerabilities, with no mainstream operating system or browser left unscathed.

At its core, vulnerability discovery means traversing control-flow graphs to find a path from an input to a dangerous function, which is a graph reachability problem. Once again this is graph traversal, a natural strength of looped architectures.
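The reachability framing can be made concrete with a small fixed-point computation: apply the same "expand the reached set" step over and over until nothing changes. The control-flow graph and function names below are hypothetical, and real vulnerability discovery involves far more than reachability. The sketch only shows why the task has an iterative shape.

```python
def reachable_sinks(cfg, entry, sinks):
    """Repeat one expansion step until the reached set stops growing.

    Returns the dangerous sinks reachable from `entry` and the number of
    expansion rounds that added new nodes.
    """
    reached = {entry}
    rounds = 0
    while True:
        expanded = reached | {succ for node in reached for succ in cfg.get(node, ())}
        if expanded == reached:
            break  # fixed point: another round would add nothing
        reached = expanded
        rounds += 1
    return reached & set(sinks), rounds


# Hypothetical control-flow graph: node -> successor nodes.
toy_cfg = {
    "read_input": ["parse_header"],
    "parse_header": ["validate", "copy_payload"],
    "validate": ["reject"],
    "copy_payload": ["memcpy_unchecked"],
    "reject": [],
    "memcpy_unchecked": [],
    "log_stats": ["system_call"],  # never reached from read_input
    "system_call": [],
}

found, rounds = reachable_sinks(
    toy_cfg, "read_input", {"memcpy_unchecked", "system_call"}
)
print(found, rounds)  # {'memcpy_unchecked'} 3
```

The number of rounds grows with the depth of the path from the input to the sink, which is the kind of workload where reusing the same computation several times pays off.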

All of this remains speculation: Anthropic has not disclosed any information about the Mythos architecture and may never do so. Still, one thought is worth considering:

Scaling Laws improve everything uniformly, while architectural innovations create exceptional peak values for tasks that match their inductive biases.

The inductive bias of looped Transformers favors iterative graph algorithms, and Mythos’s peak performance occurs precisely in graph traversal tasks. Anthropic may not say it, but the test data speaks for itself.
