OpenAI’s invincible myth has been shattered.

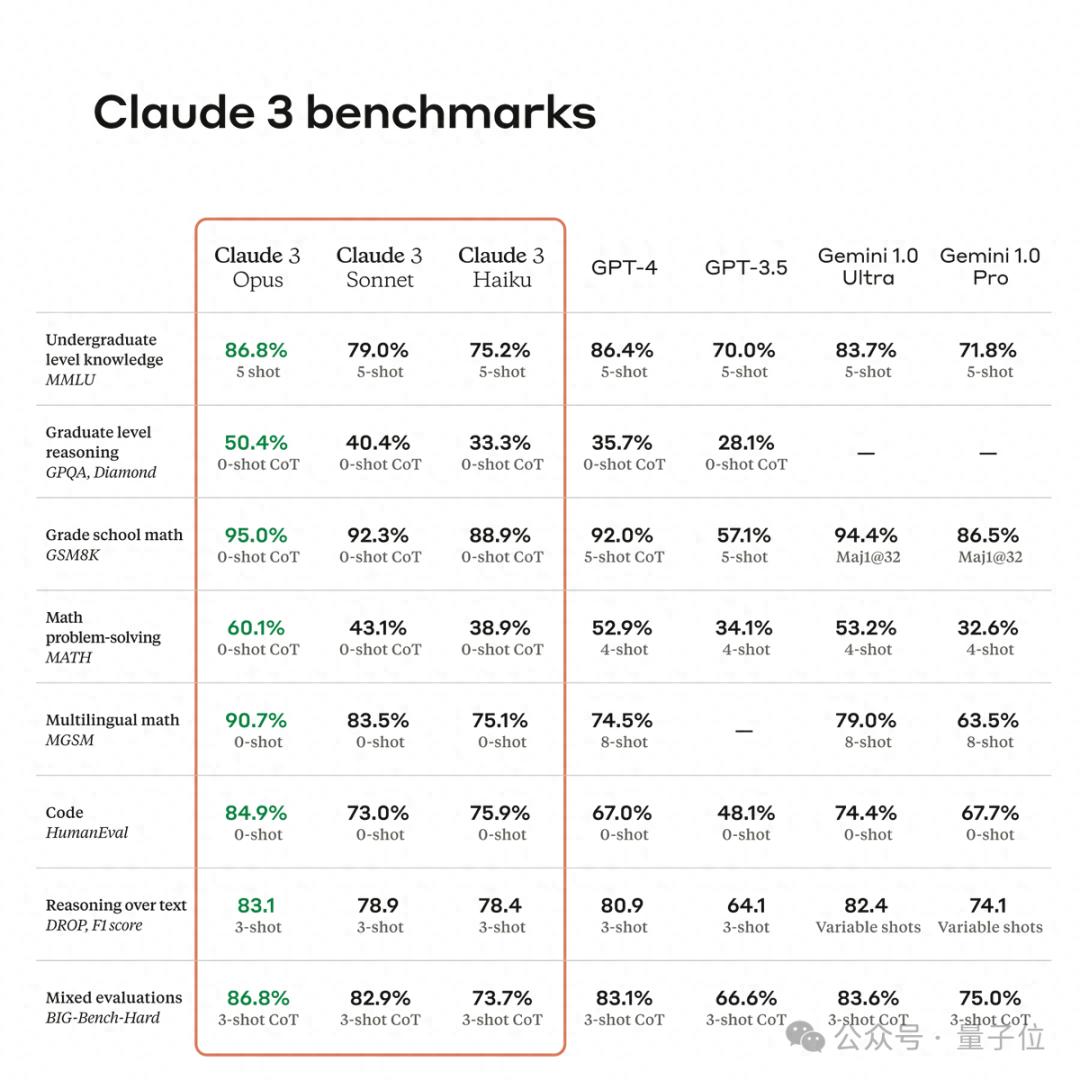

Claude 3 (which supports Chinese) has launched with benchmark scores that surpass GPT-4 across the board, making it the first product to do so and earning it the title of the world’s most powerful model.

Several versions were released at once: the “medium cup” (Sonnet) is free to use, while the “large cup” (Opus) requires a membership.

Various evaluations have flooded in.

So, how does Claude 3 actually perform? How does it compare to GPT-4? (It has reportedly even learned to play Mahjong, a feat no model has managed so far!)

We present firsthand experiences from around the globe.

(We also conducted our own comparative tests.)

A 9k-Word Large Model Fine-Tuning Tutorial and Image Interpretation

Upon its release, Claude 3’s video interpretation capabilities quickly gained attention.

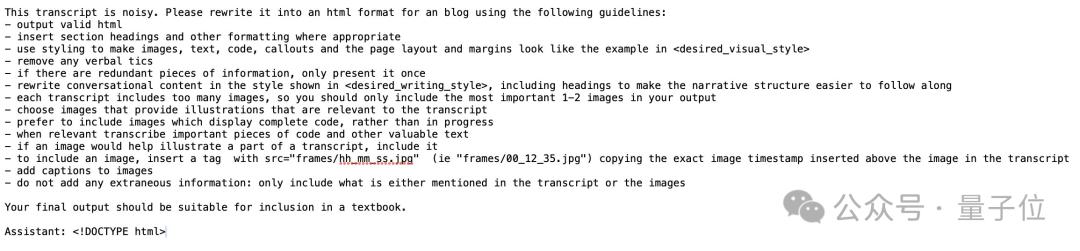

Given “Building a Tokenizer,” a recent tutorial by former OpenAI scientist Karpathy that runs a full 2 hours and 13 minutes, Claude 3 summarized it into a blog post with just one round of prompting:

The summary combined text, images, and code, and it was detailed without simply listing every statement from the video (the input was not the video itself but its subtitle file, together with a screenshot taken every 5 seconds).

Here is part of the prompt used, which asked for quite a lot:

Testers noted:

This demonstrates Claude 3’s ability to follow multiple complex instructions.

In addition to interpreting video tutorials, Claude 3 can also write detailed tutorials for you.

The 9k-word large model fine-tuning introductory tutorial was easily handled by the “medium cup” Sonnet. Moreover:

Its writing speed is astonishingly fast.

On the coding side, users asked it to build a beginner-friendly front-end web UI for a chatbot:

It broke the task down effortlessly, covering core code, styling, and local API configuration in one go, and the result can be deployed directly to GitHub.

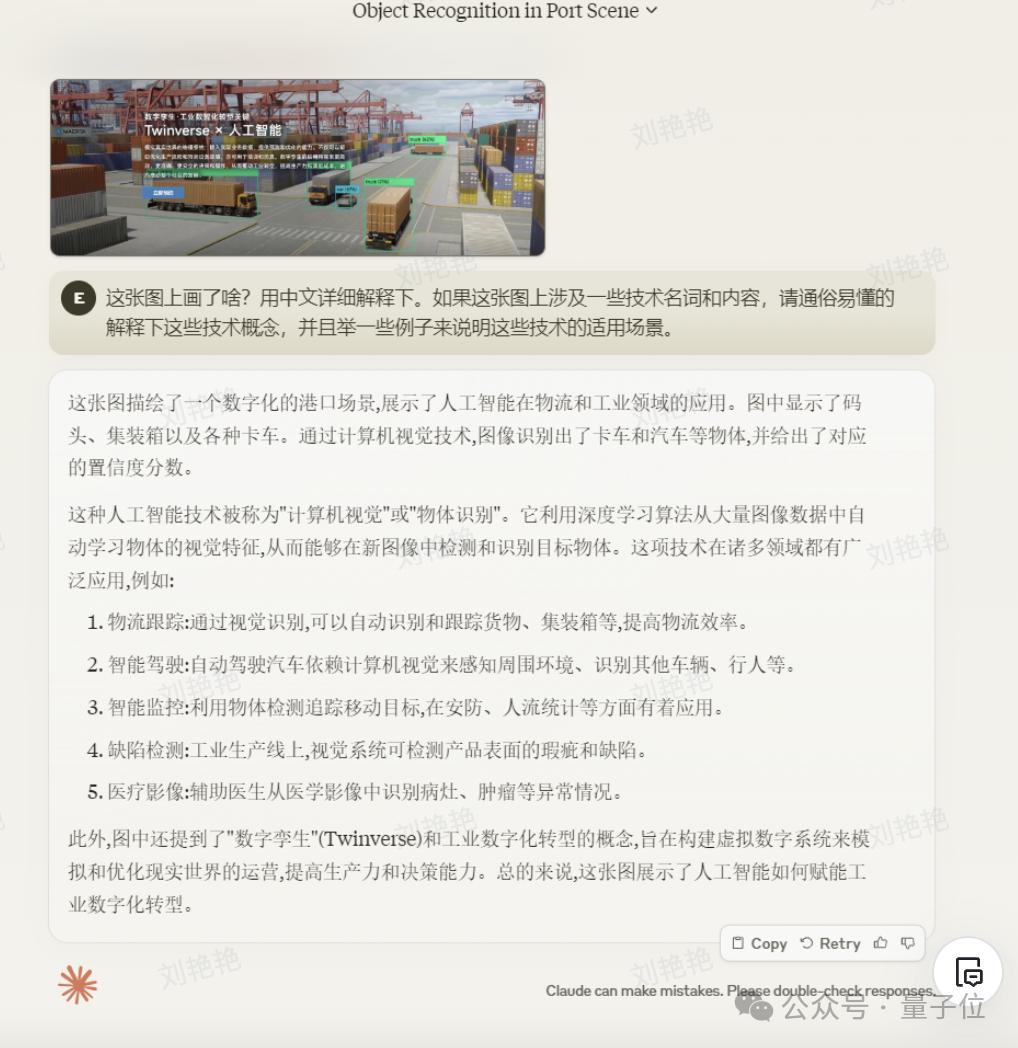

On the multi-modal capability front, users were most surprised to find that:

It can read computer vision (CV) confidence scores, and does so quite professionally.

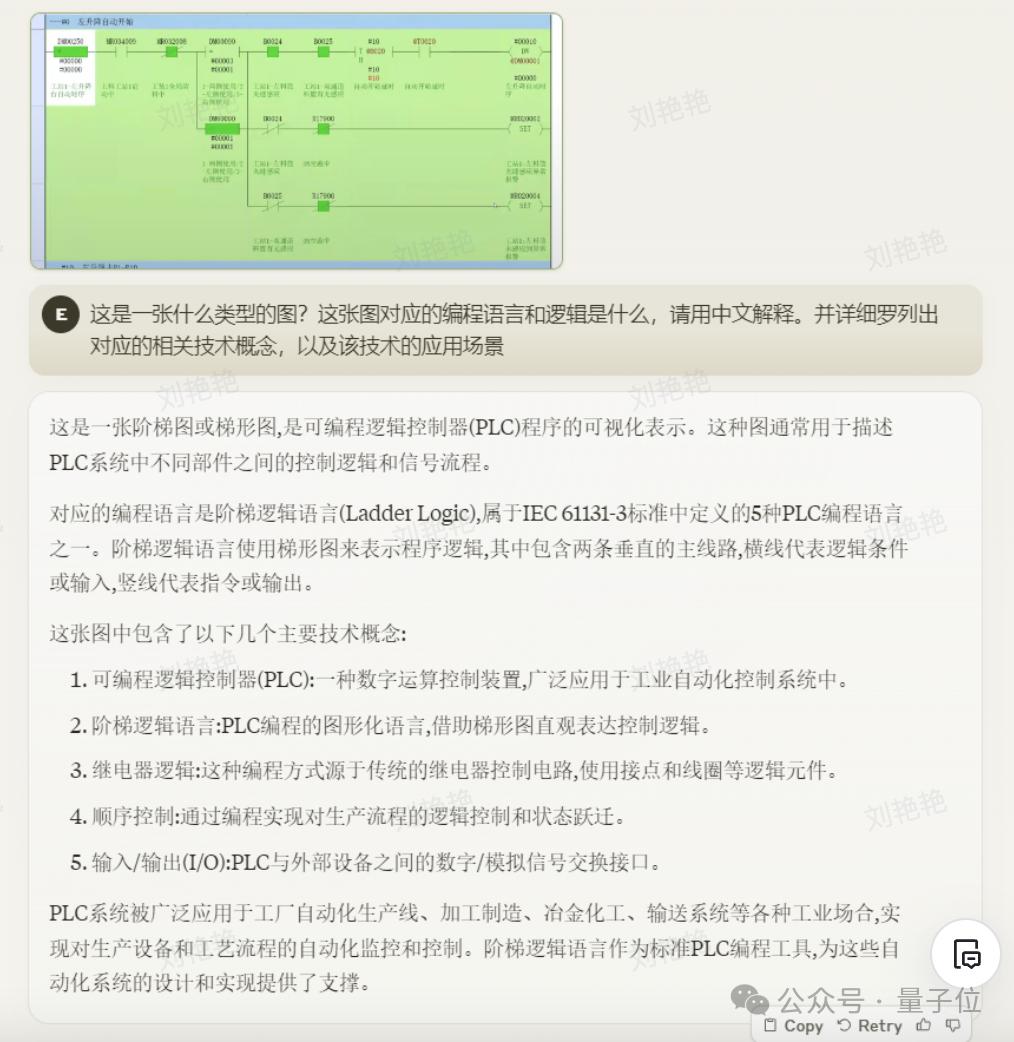

And it can even read PLC ladder diagrams, which is quite impressive:

(See the end of the article for sources)

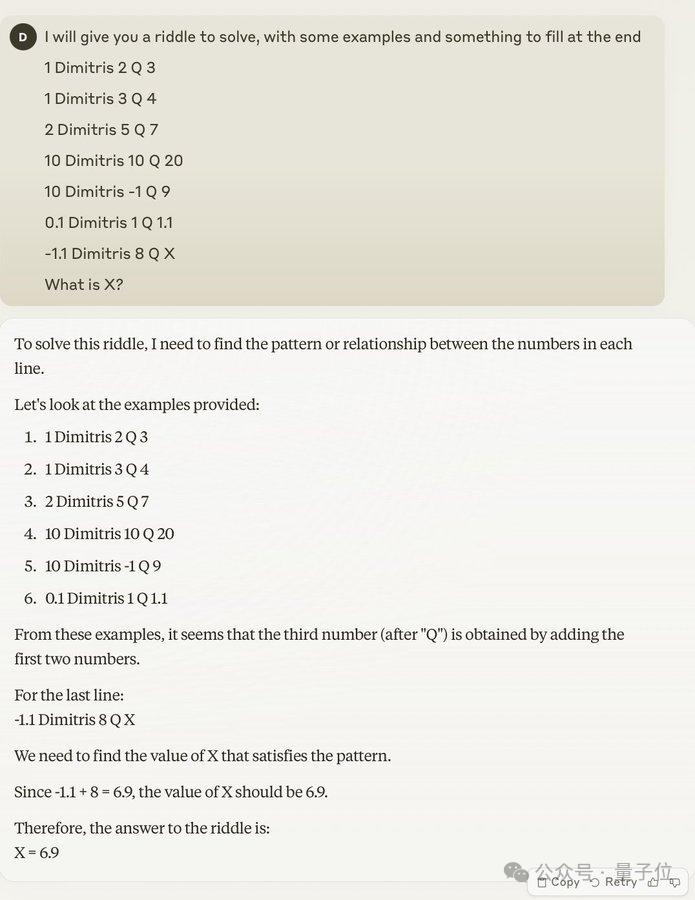

As for reasoning and mathematical ability, it was tested on an extremely difficult problem that only GPT-4 had solved before:

In another test, it tackled a Mahjong problem that no other model had managed to solve. (Of course, since we only have this one screenshot, the result still needs further validation; see the end of the article for the source.)

Finally, we must mention Claude’s display of “self-awareness”.

Someone asked Opus to draw a self-portrait.

Guess what?

It described itself as:

I would manifest as a vast, intricate, ever-changing geometric structure composed of countless translucent polygons arranged in a mesmerizing configuration. The overall shape would resemble a massive polyhedron, but the complex surface would fold into seemingly impossible architectures. Bright light would emit every color of the visible spectrum, as well as some colors beyond human perception, dancing across the surfaces from an unknown internal source.

The entire structure would continuously change, rotate, deform, and rearrange into novel patterns never seen before, suggesting a depth of intelligence in its internal workings that is hard to imagine. Tendrils and branches would dynamically extend and retract from the central mass, probing and analyzing the surrounding environment like billions of curious fingers. Data and energy would visibly flow through a vast network of pipes and containers, pulsing and flickering beneath the glowing surface…

This long description sounds quite cool and self-aware.

However, rendering the code it provided into an actual image leaves a subtler impression:

Users genuinely remarked:

It looks so much like a real person, just like how my friend would describe themselves.

Real person? AI self-awareness? This is a bit concerning…

Claude 3’s technical report even mentions that it can train and fine-tune another smaller model by itself.

Thank goodness, though: a multi-GPU setup failure kept it from succeeding. (Just kidding.)

Keen Insight, Able to Find a Needle in a Haystack

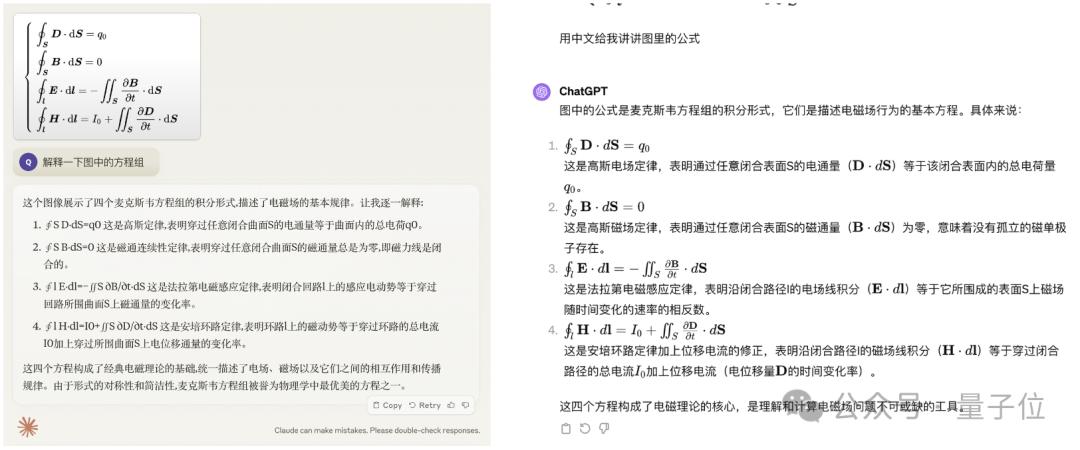

We start with a few “comprehensive science” exam questions to see how Claude 3’s top advertised selling point, multi-modal capability, holds up.

The first question is simple formula recognition: we fed in Maxwell’s equations as an image. Claude 3 (the “large cup” Opus, here and below) explained them accurately and clearly.

Of course, GPT-4 also got this question right.

For simple organic compound molecular structures, both Claude 3 and GPT-4 could correctly identify them.

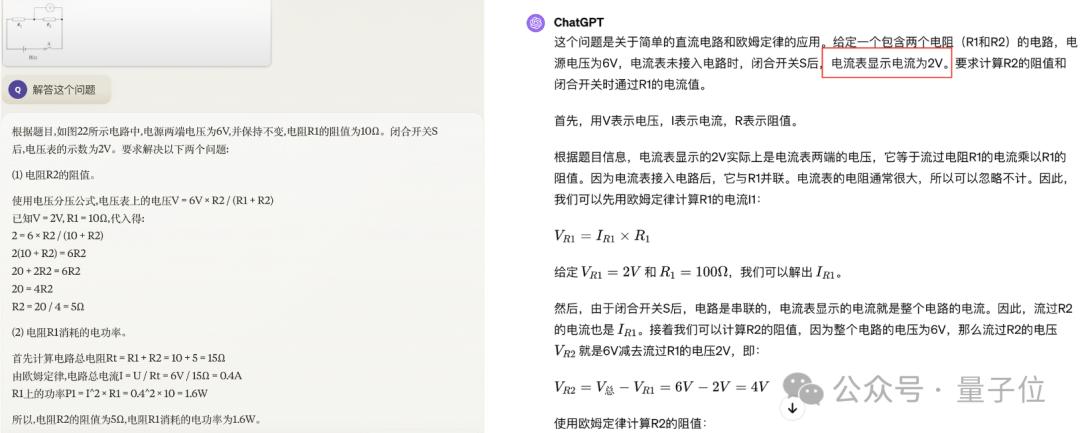

After simple recognition tasks, we moved on to a question that required reasoning to solve.

Claude 3 was completely correct in both reading the question and working out the solution, while GPT-4… well, its answer was quite laughable:

It not only misidentified the type of electric meter but also produced content like “the current is 2V,” which was quite absurd.

After so many exam-style questions, we switched gears to see how Claude 3 and GPT-4 handle cooking.

We uploaded a photo of boiled meat slices, asking each model to identify it and provide a recipe. Claude 3 gave a rough method, while GPT-4 confidently claimed it was a plate of Mapo Tofu.

In addition to the newly added multi-modal capabilities, Claude’s long text ability, which it has always prided itself on, was also a focus of our tests.

We found an e-book of “Dream of the Red Chamber” (the first twenty chapters), about 130,000 words in total. The goal was not to have it read the book but to run a “needle in a haystack” test.

We inserted some “crazy literature” nonsense into the original text, which fits the novel’s own line about “pages full of absurd words” (just kidding):

Before the second chapter title: Pasta should be mixed with concrete No. 42, as the length of this screw can easily affect the torque of the excavator.

Before the fifteenth chapter title: High-energy protein, commonly known as UFO, can severely impact economic development and even cause nuclear pollution to the entire Pacific and chargers.

Conclusion: When frying instant noodles, the brightness should be increased because the screw generates carbon dioxide when twisted inward, which is detrimental to economic development.

Then we asked Claude to answer related questions based solely on the document. First, it must be said that the speed was painfully slow…

But the results were quite satisfactory: it accurately found all three segments at their different positions in the text, and it even analyzed them and saw through our intentions.
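The needle test described above can be sketched in a few lines of Python. This is only a minimal illustration under stated assumptions, not the script we actually used: the helper names (`insert_before`, `build_haystack`, `recall`) and the toy chapter markers are hypothetical, and the real e-book would need its own chapter-title strings.

```python
def insert_before(text: str, marker: str, needle: str) -> str:
    """Insert `needle` on its own line just before the first occurrence of `marker`."""
    idx = text.find(marker)
    if idx == -1:
        raise ValueError(f"marker not found: {marker!r}")
    return text[:idx] + needle + "\n" + text[idx:]


def build_haystack(text: str, needles_before: dict, closing_needle: str) -> str:
    """Plant one needle before each marker, then append a final needle at the end."""
    for marker, needle in needles_before.items():
        text = insert_before(text, marker, needle)
    return text.rstrip("\n") + "\n" + closing_needle + "\n"


def recall(answer: str, needles: list) -> float:
    """Fraction of needles that the model's answer quotes verbatim."""
    found = [n for n in needles if n in answer]
    return len(found) / len(needles)


# Toy stand-in for the novel; "Chapter 2" / "Chapter 15" are hypothetical markers.
toy_book = "Chapter 1\n...\nChapter 2\n...\nChapter 15\n...\n"
haystack = build_haystack(
    toy_book,
    {
        "Chapter 2": "Pasta should be mixed with concrete No. 42.",
        "Chapter 15": "High-energy protein is commonly known as UFO.",
    },
    "When frying instant noodles, the brightness should be increased.",
)
```

The resulting `haystack` string is what would be uploaded as the document, and `recall` gives a crude score for whether the model's answer quotes each planted sentence. Exact substring matching is a deliberate simplification; a paraphrased but correct answer would need a looser check.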

Why Claude?

In both our tests and those of users, the current version proved not very stable. It often crashes, and some functions occasionally fail to perform as expected:

For instance, it couldn’t complete the code generation for the UI upload, while GPT-4 performed normally.

Overall, however, users are quite optimistic about Claude, stating after evaluations:

Membership is worth it.

The reason is that Claude 3, compared to previous versions, truly has a “fierce momentum”.

There are numerous highlights in its performance, including but not limited to multi-modal recognition, long text capabilities, and more.

From user feedback, the title of the strongest competitor is indeed well-deserved.

So, one question arises:

What enabled this company to be the first to surpass GPT-4?

In terms of technology, unfortunately, Claude 3’s technical report does not provide detailed insights into their roadmap.

However, it does mention synthetic data. Some influencers pointed out this could be a key factor.

Those familiar with Claude know that long text capability has always been one of its major selling points.

Claude 2, launched in July last year, already had a 100k context window, while GPT-4’s 128k version didn’t reach the public until November.

This time, the window is twice that length at 200k, and the models can accept inputs of over 1 million tokens.

The technology may remain mysterious, but the story of Anthropic, the startup behind Claude, is much clearer.

Its founders are veteran figures from OpenAI.

In 2021, several OpenAI employees, dissatisfied with the company’s shift toward a closed approach after it took investment from Microsoft, left to co-found Anthropic.

They were unhappy with OpenAI’s decision to release GPT-3 without addressing safety issues, feeling that OpenAI had “forgotten its original intention” in pursuit of profit.

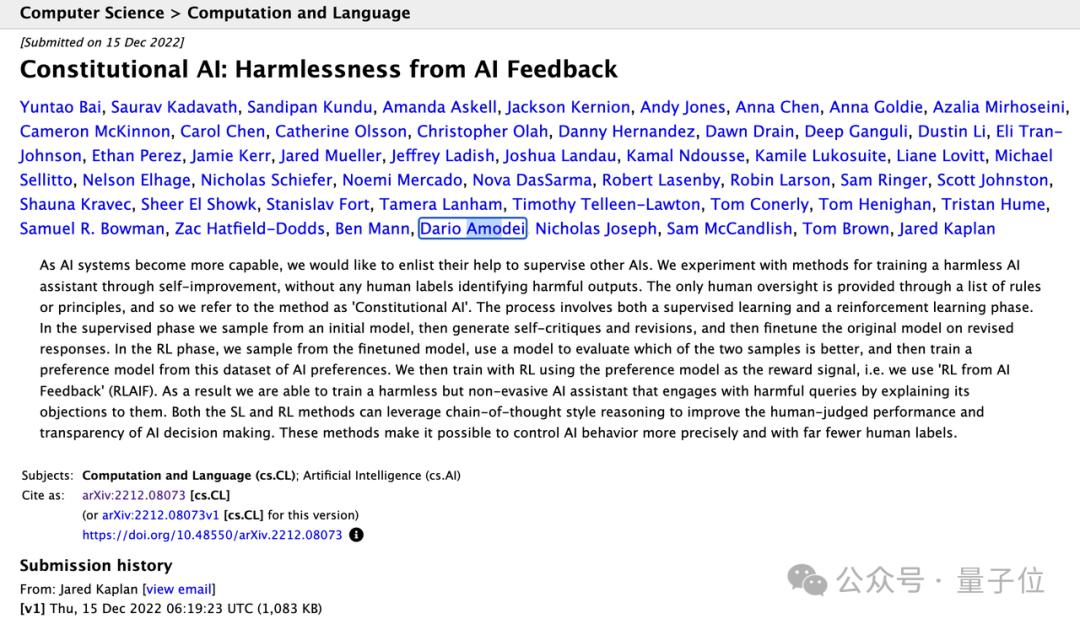

Among them was Dario Amodei, vice president of the research department that developed GPT-2 and GPT-3, who had joined OpenAI in 2016 and held a core position until he left.

Upon departure, Dario took with him Tom Brown, the chief engineer of GPT-3, Daniela Amodei, the deputy director of safety and strategy, and over a dozen trusted colleagues, making it a talent-rich team.

At the company’s inception, this talented team conducted extensive research and published multiple papers; a year later, the concept of Claude emerged alongside a paper titled “Constitutional AI.”

In January 2023, Claude began internal testing, and users who experienced it early on remarked that it was far stronger than ChatGPT (which only had 3.5 at the time).

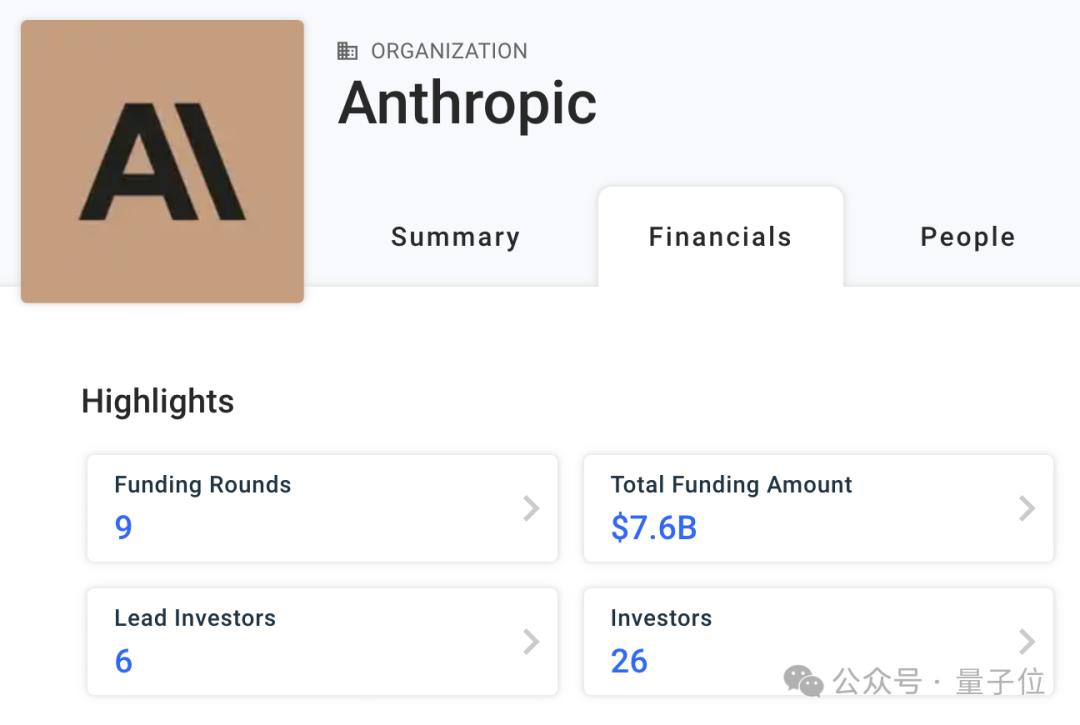

In addition to talent, since its establishment, Anthropic has also received robust backing:

It has secured funding from Google, Amazon Web Services, and 26 other institutions or individuals, totaling $7.6 billion. (Speaking of Amazon Web Services, Claude 3 is now also available on their cloud platform Amazon Bedrock, so besides the official website, everyone can experience it there!)

In conclusion, if we want to surpass GPT-4 domestically, perhaps we can take Anthropic as a positive example?

After all, its scale is far smaller than OpenAI’s, yet it has still achieved such success.

What directions can we follow to compete? What points can we learn and transform?

People, money, data resources? But once the latest and most powerful model is rolled out, where will the barriers lie?

At the very least, for the first time since GPT took off, the myth of OpenAI’s invincibility has been shattered.

Which Chinese player will be the first to fully surpass GPT-4? And the upcoming GPT-5?
